IEC 62304 in Practice: Medical Device Software Without the Waterfall

By Jason Brumwell

Most teams hear "IEC 62304" and picture waterfall. Requirements phase, design phase, implementation phase, testing phase, all marching forward in sequence with sign-offs at each gate. That picture is wrong. IEC 62304:2006+AMD1:2015 is methodology-agnostic. Table B.1 in Annex B explicitly lists incremental and evolutionary strategies, which is where agile fits, alongside waterfall as valid approaches. The standard cares that lifecycle tasks are completed and captured. It does not care whether you complete them in sprints or phases.

For ContourCompanion, we ran two-week sprints against a Class C software safety classification, the highest tier. Death or serious injury is possible if the software fails. That classification shaped the entire project: documentation scope, test coverage, codebase structure, third-party library management. The documentation was lean, but fully traced. Requirements linked to risk controls. Tests linked to requirements.

What IEC 62304 Actually Requires

IEC 62304 defines software lifecycle processes for medical devices. The FDA recognizes it as a consensus standard, meaning compliance with IEC 62304 creates a presumption of conformity with the FDA's software lifecycle expectations. It's not the only way to satisfy the FDA, but it's the most efficient way to avoid arguments during review. (For context on which FDA pathway drives your evidence requirements, see FDA Pathways for SaMD.)

The standard defines five processes: software development, software maintenance, software risk management, software configuration management, and software problem resolution. The development process is itself broken into eight activities (Clauses 5.1 through 5.8): planning, requirements analysis, architectural design, detailed design, unit implementation and verification, integration and integration testing, system testing, and release. You can read the full list on the ISO catalog page. What matters for implementation is that not all of these activities apply equally: detailed design (5.4) and unit verification (5.5) only kick in at higher safety classifications. Class A software skips detailed design and unit verification entirely. Class C requires all of it. That classification decision, covered in the next section, is the single biggest driver of your documentation burden.

The FDA's June 2023 software guidance replaced the old three-tier "Level of Concern" system with two documentation levels: Basic and Enhanced. Enhanced documentation is required when a software failure could present a hazardous situation with a probability of death or serious injury, which corresponds closely to IEC 62304 Class C. If you're Class C under IEC 62304, expect to produce Enhanced documentation for the FDA. The two frameworks reinforce each other.

Software Safety Classification: The Decision That Shapes Everything

IEC 62304 classifies software into three safety classes based on the severity of harm if the software fails:

| Class | Worst-Case Harm | What It Means | Documentation Required |
|---|---|---|---|
| A | No injury possible | Software failure cannot contribute to a hazardous situation | Requirements, system testing, release docs |
| B | Non-serious injury | Software can contribute to a hazardous situation, but not death or serious injury | + Architecture docs, integration testing, verification |
| C | Death or serious injury | Software can contribute to death or serious injury | + Detailed design, unit verification, code review, full traceability |

ContourCompanion was Class C. As covered in What Is SaMD?, the software generates OAR contours that feed directly into radiation treatment planning systems. If a contour is wrong, the treatment plan routes radiation through healthy tissue. That's a severity S5 hazard (death or serious disablement), the highest level on the severity scale we defined for our ISO 14971 risk analysis. The standard itself leaves the scale to the manufacturer.

Here's the nuance that trips teams up: the classification applies to the software system, but IEC 62304 allows you to decompose the system into software items and classify them independently. A Class C system can contain Class A and Class B items, provided two things hold. First, your risk analysis must demonstrate that failure of a specific item cannot contribute to a hazardous situation (Class A) or can contribute only to non-serious injury (Class B). Second, your architecture must show how those items are segregated from the Class C items. For ContourCompanion, the DICOM parsing and routing components were Class A (failure means the contour doesn't arrive, not that it arrives wrong). The inference engine and contour generation pipeline were Class C. This decomposition reduced the documentation burden on roughly 40% of the codebase without reducing safety rigor where it mattered.

The takeaway: classify at the software item level, not the system level. A Class C system with proper architectural decomposition produces significantly less documentation than one treated as a monolith.
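The item-level decision can be written down as a rule so that it is applied the same way every time. Below is a minimal sketch under stated assumptions:

- The `SoftwareItem` record, the severity buckets, and the item names are hypothetical.
- The default (an item inherits its parent's class unless a segregation rationale is documented) reflects how we read the standard's decomposition rule. It is not a quotation of it.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class SafetyClass(IntEnum):
    A = 1  # cannot contribute to a hazardous situation
    B = 2  # can contribute, but only to non-serious injury
    C = 3  # can contribute to death or serious injury


class Severity(IntEnum):
    NONE = 0  # failure cannot contribute to a hazardous situation
    NON_SERIOUS = 1
    SERIOUS_OR_DEATH = 2


@dataclass
class SoftwareItem:
    name: str
    worst_severity: Severity  # worst harm this item's failure can contribute to (from the risk analysis)
    segregation_rationale: Optional[str] = None  # documented reason it may sit below its parent's class


def classify(item: SoftwareItem, parent: SafetyClass) -> SafetyClass:
    """Derive an item's class from the risk analysis; without a rationale it inherits the parent's class."""
    derived = {
        Severity.NONE: SafetyClass.A,
        Severity.NON_SERIOUS: SafetyClass.B,
        Severity.SERIOUS_OR_DEATH: SafetyClass.C,
    }[item.worst_severity]
    if derived > parent:
        raise ValueError(f"{item.name} outranks its parent; revisit the system classification")
    if derived < parent and not item.segregation_rationale:
        return parent  # no documented rationale: keep the inherited class
    return derived


# Hypothetical items for illustration only.
items = [
    SoftwareItem("dicom_router", Severity.NONE, "Failure stops delivery; it cannot alter contour data"),
    SoftwareItem("contour_pipeline", Severity.SERIOUS_OR_DEATH),
    SoftwareItem("audit_logger", Severity.NONE),  # rationale not written yet
]
for item in items:
    print(item.name, classify(item, SafetyClass.C).name)
# dicom_router A
# contour_pipeline C
# audit_logger C
```

Making inheritance the default means a missing rationale fails safe. The item stays at its parent's class until someone writes the justification down.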

How We Ran Agile Under Class C

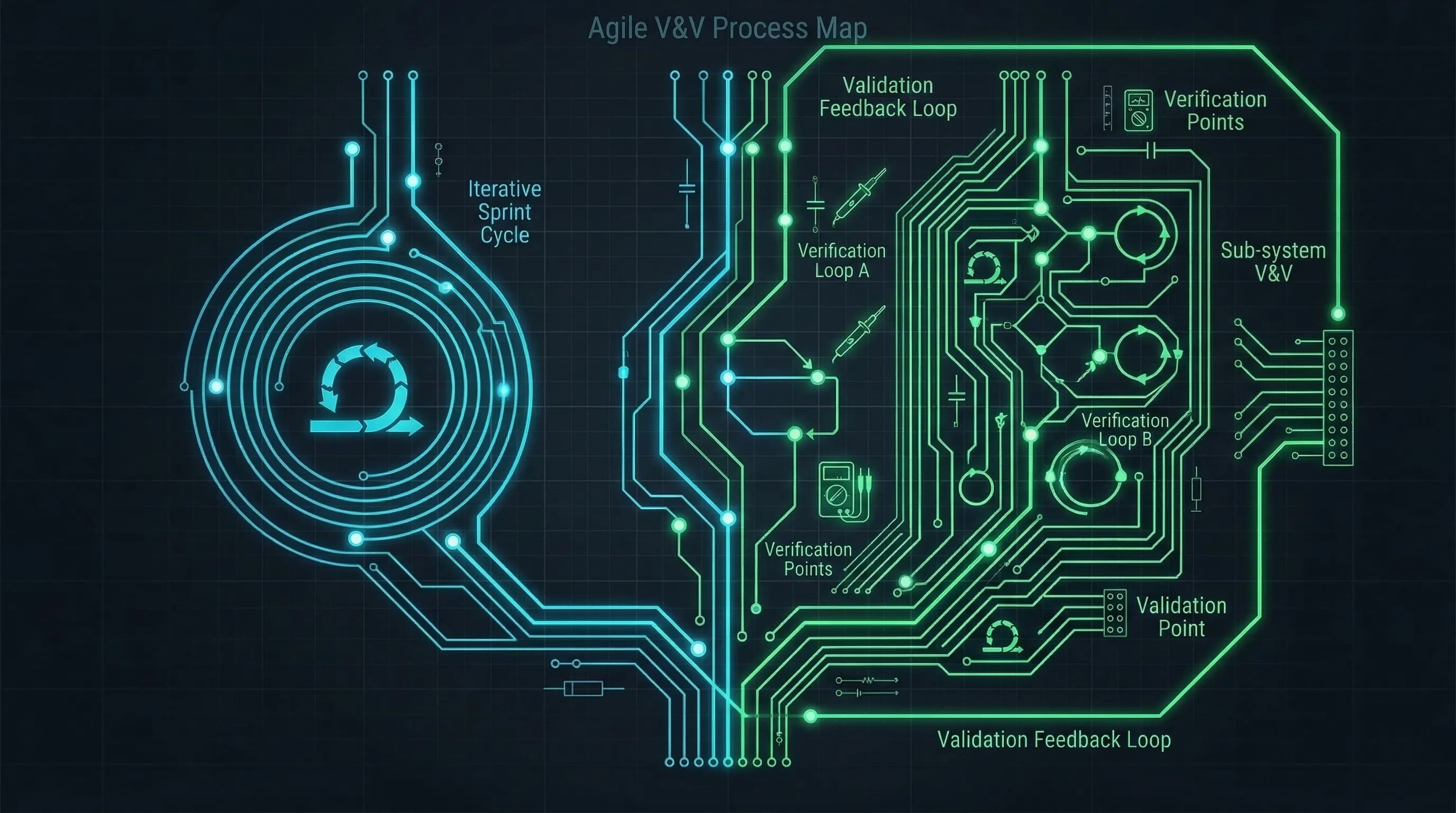

The AAMI TIR45:2012+A1:2018 technical information report provides the bridge between agile and IEC 62304. The core idea: the V-model doesn't run once as a waterfall. It runs inside every sprint. Each sprint has its own mini-V: requirements refinement at the top, implementation at the bottom, verification climbing back up. System testing and formal design reviews happen at release milestones. Risk management and configuration management run continuously underneath.

To make this concrete, here's what a real user story looked like for a Class C item in ContourCompanion:

Story: As a radiation oncologist, I need the autocontouring system to generate an organ-at-risk contour that the treatment planning system can load as an RT Structure Set.

Acceptance criteria (design inputs): Contour must be a valid DICOM RT Structure Set per PS3.3 Annex C. Contour must reference the correct CT frame of reference UID. Structure labels must conform to the configured naming convention. System must return an error if the input CT series is incomplete (missing slices).

Risk-derived requirement: If a contour fails to generate, the system must not silently omit it from the RT Structure Set. Failure must be surfaced to the user with the specific structure identified. (Traced to a hazard in the risk management file: missing OAR contour leads to unprotected organ during treatment.)
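The following is a sketch of how the risk-derived requirement could look in code, with two behaviours:

- The assembler refuses to build a partial structure set.
- It names every structure that failed.

`StructureResult`, `ContourGenerationError`, and `assemble_structure_set` are hypothetical names, not ContourCompanion's actual API. The real output would be a DICOM RT Structure Set rather than a plain dict.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class StructureResult:
    label: str                      # configured structure name, e.g. "SpinalCord"
    contour: Optional[list] = None  # per-slice polygons; None or empty means generation failed
    error: Optional[str] = None     # reason reported by the pipeline, if any


class ContourGenerationError(Exception):
    """Raised instead of silently dropping a structure from the output."""

    def __init__(self, failed: Dict[str, str]):
        self.failed = failed
        detail = "; ".join(f"{label}: {reason}" for label, reason in failed.items())
        super().__init__(f"Contour generation failed for {len(failed)} structure(s): {detail}")


def assemble_structure_set(results: List[StructureResult]) -> Dict[str, list]:
    """Return every requested structure, or raise naming each one that failed."""
    failed = {r.label: r.error or "no contour produced" for r in results if not r.contour}
    if failed:
        # Risk control: never emit a structure set with a missing OAR.
        raise ContourGenerationError(failed)
    return {r.label: r.contour for r in results}


try:
    assemble_structure_set([
        StructureResult("SpinalCord", contour=[[(0.0, 0.0), (1.0, 0.0), (1.0, 1.0)]]),
        StructureResult("Brainstem", error="inference timed out"),
    ])
except ContourGenerationError as exc:
    print(exc)
# Contour generation failed for 1 structure(s): Brainstem: inference timed out
```

Raising, rather than returning a partial result with a warning, forces every caller to handle the failure explicitly. That is the property the hazard analysis asks for.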

That story pulls triple duty. The acceptance criteria drove the unit tests. The risk-derived requirement came straight from the ISO 14971 hazard analysis. When the sprint was done, the traceability chain was already built: requirement → implementation (PR) → test → risk control. No separate documentation phase needed.

The Software Development Plan defined this structure upfront. Two-week sprints. Sprint reviews served as informal design reviews. Formal design reviews happened at three milestones: architecture complete, feature freeze, and pre-release. The plan specified which V-model activities happened every sprint (requirements refinement, detailed design for Class C items, unit verification, integration testing, risk assessment updates) versus which happened only at milestones (system testing, release documentation, clinical validation).

A Class A item in the same sprint looked different. The DICOM routing service, for example, was Class A. A failure there means the contour never arrives at the treatment planning system, but it can't cause a wrong contour to arrive. No detailed design document. No mandatory unit testing (though we wrote tests anyway because they're useful). Requirements, implementation, integration testing. The sprint backlog mixed Class A and Class C items, but the process rigor for each item scaled to its classification.
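One way to make that scaling mechanical is a definition-of-done check keyed by safety class. This is a sketch with three assumptions:

- The activity names paraphrase the sprint practice described in this article. They are not a transcription of the standard's requirements matrix.
- `BacklogItem` is a hypothetical type.
- The ticket keys are invented.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, Set

# Per-class evidence, paraphrasing the sprint practice described in this article.
_CLASS_A = frozenset({"requirement", "implementation", "integration_test"})
_CLASS_B = _CLASS_A | {"unit_verification"}
_CLASS_C = _CLASS_B | {"detailed_design", "code_review", "risk_review"}
REQUIRED_EVIDENCE: Dict[str, FrozenSet[str]] = {"A": _CLASS_A, "B": _CLASS_B, "C": _CLASS_C}


@dataclass
class BacklogItem:
    key: str
    safety_class: str  # "A", "B", or "C"
    evidence: Set[str] = field(default_factory=set)


def missing_evidence(item: BacklogItem) -> Set[str]:
    """Activities the item's class requires that have no recorded evidence yet."""
    return set(REQUIRED_EVIDENCE[item.safety_class] - item.evidence)


# Hypothetical backlog items; the Class A item carries extra tests, which is allowed.
router = BacklogItem("ROUTE-12", "A", {"requirement", "implementation", "integration_test", "unit_verification"})
pipeline = BacklogItem("CONTOUR-31", "C", {"requirement", "implementation", "unit_verification", "integration_test"})
print(sorted(missing_evidence(router)))    # []
print(sorted(missing_evidence(pipeline)))  # ['code_review', 'detailed_design', 'risk_review']
```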

Traceability was enforced by tooling, not discipline. Requirements carried unique IDs. Test cases referenced the requirement they verified. PRs referenced the requirement they implemented. The traceability matrix was generated from these links, not maintained by hand. When FDA reviewers asked "show me the test that verifies requirement SRS-047," the answer was a query, not a document search.
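The following is a minimal sketch of "a query, not a document search". It assumes requirement, test, and PR records have already been exported from your tools as plain dicts.

- The record shapes and the `SRS-`/`TC-`/`PR-` identifiers (other than SRS-047) are hypothetical.
- A real pipeline would pull these from the issue tracker, test runner, and version control.

```python
from typing import Dict, List, Tuple

# Hypothetical exports from the requirements tool, test runner, and version control.
requirements = {"SRS-046": "Reject incomplete CT series", "SRS-047": "Surface failed structures"}
test_cases = [
    {"id": "TC-112", "verifies": ["SRS-047"]},
    {"id": "TC-113", "verifies": ["SRS-047", "SRS-099"]},
]
pull_requests = [{"id": "PR-318", "implements": ["SRS-046", "SRS-047"]}]

Matrix = Dict[str, Dict[str, List[str]]]


def build_matrix(reqs: Dict[str, str], tcs: List[dict], prs: List[dict]) -> Tuple[Matrix, Dict[str, List[str]]]:
    """Return the requirement -> {tests, prs} matrix plus links to requirement IDs that don't exist."""
    matrix: Matrix = {rid: {"tests": [], "prs": []} for rid in reqs}
    dangling: Dict[str, List[str]] = {}

    def link(rid: str, kind: str, artifact: str) -> None:
        if rid in matrix:
            matrix[rid][kind].append(artifact)
        else:
            dangling.setdefault(rid, []).append(artifact)  # points at a retired or mistyped ID

    for tc in tcs:
        for rid in tc["verifies"]:
            link(rid, "tests", tc["id"])
    for pr in prs:
        for rid in pr["implements"]:
            link(rid, "prs", pr["id"])
    return matrix, dangling


matrix, dangling = build_matrix(requirements, test_cases, pull_requests)
print(matrix["SRS-047"])  # {'tests': ['TC-112', 'TC-113'], 'prs': ['PR-318']}
print([rid for rid, links in matrix.items() if not links["tests"]])  # ['SRS-046']
print(dangling)  # {'SRS-099': ['TC-113']}
```

Untested requirements and tests that point at nonexistent requirements both surface as query results, instead of as findings a reviewer has to discover by hand.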

Risk management ran continuously. Every sprint that touched a Class C software item included a risk review. New hazards identified during implementation were added to the risk management file within the same sprint. This is exactly what ISO 14971 expects, and it's much easier to do in small increments than as a massive pre-implementation exercise. By release time, the risk management file was already complete. Teams that defer risk management to a pre-submission phase end up reconstructing months of decisions from memory.

Unit Verification for Class C: What the Standard Actually Demands

IEC 62304 doesn't use the term "unit testing." It requires verification that software units meet predefined acceptance criteria. The distinction matters. For Class B, manufacturers can satisfy this with source code analysis alone (code review, static analysis). For Class C, you must actually execute the software unit and verify the results. No shortcuts.

The acceptance criteria for Class C go beyond "does this function return the expected output." You're verifying functional correctness against the detailed design, but also error handling, boundary conditions, memory usage, and performance. The acceptance criteria have to be specific enough to test against.

For ContourCompanion's contour generation pipeline, this translated to specific, measurable acceptance criteria in the detailed design:

- Contour accuracy: Each OAR contour generation function was tested against a set of reference CT volumes with expert-drawn ground truth contours. The acceptance criterion was geometric agreement within the tolerance specified by the clinical requirements.

- DICOM edge cases: What happens when a CT series has missing slices? When the slice spacing is irregular? When the pixel data is in an unexpected transfer syntax? Each of these scenarios had a defined expected behavior (graceful error, not silent failure), and each was a unit test.

- Memory bounds: The inference engine processing large CT volumes had to stay within a defined memory allocation. We tested this explicitly because an OOM crash during contour generation is a failure mode that the risk analysis flagged: the system goes silent and the clinician doesn't know whether the contour was generated or not.

- Performance: Contour generation for a full OAR set had to complete within the timeout window. A function that technically produces correct results but takes 45 minutes is a usability failure that affects clinical adoption.
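The "missing slices" and "irregular slice spacing" cases can be expressed as executable unit tests. This is a sketch under the following assumptions:

- Slice z-positions have already been read from each image, for example as the third component of Image Position (Patient) in a purely axial series. This simplifies projecting onto the slice normal.
- `check_slice_spacing`, `IncompleteSeriesError`, and the 1% tolerance are hypothetical choices, not values from ContourCompanion's detailed design.

The test functions follow pytest naming but do not require pytest to run.

```python
from typing import Sequence


class IncompleteSeriesError(ValueError):
    """Raised so the caller gets an explicit error instead of a contour built on a partial series."""


def check_slice_spacing(z_positions: Sequence[float], rel_tol: float = 0.01) -> float:
    """Return the uniform slice spacing (mm), or raise on missing slices or irregular spacing."""
    if len(z_positions) < 2:
        raise IncompleteSeriesError("series has fewer than two slices")
    z = sorted(z_positions)
    gaps = [b - a for a, b in zip(z, z[1:])]
    spacing = min(gaps)  # assume the smallest gap is the nominal spacing
    if spacing <= 0:
        raise IncompleteSeriesError("duplicate slice positions")
    tol = rel_tol * spacing
    for i, gap in enumerate(gaps):
        if abs(gap - spacing) <= tol:
            continue
        # A gap that is a whole multiple of the spacing points to missing slices.
        skipped = round(gap / spacing) - 1
        if skipped >= 1 and abs(gap - (skipped + 1) * spacing) <= tol:
            raise IncompleteSeriesError(f"{skipped} slice(s) missing after z={z[i]:.2f}")
        raise IncompleteSeriesError(f"irregular spacing of {gap:.2f} mm after z={z[i]:.2f}")
    return spacing


def _expect_error(z_positions, fragment):
    try:
        check_slice_spacing(z_positions)
    except IncompleteSeriesError as exc:
        assert fragment in str(exc), str(exc)
    else:
        raise AssertionError("expected IncompleteSeriesError")


def test_regular_series_passes():
    assert check_slice_spacing([5.0, 0.0, 2.5]) == 2.5


def test_missing_slice_is_reported():
    _expect_error([0.0, 2.5, 5.0, 10.0], "1 slice(s) missing after z=5.00")


def test_irregular_spacing_is_reported():
    _expect_error([0.0, 2.5, 5.0, 8.5], "irregular spacing of 3.50 mm")
```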

These criteria were written into the detailed design document before implementation started. The tests were written alongside the code, not after. The CI pipeline executed them on every build and recorded the evidence: test name, version of code under test, timestamp, pass/fail, execution environment. That's the reproducibility evidence IEC 62304 requires. Generating it manually, which is what teams without CI automation do, is one of the biggest time sinks in regulated development.
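The following is a minimal sketch of one evidence record per test. Its assumptions:

- The CI job knows the commit SHA it built; most CI systems expose this as an environment variable.
- The job appends each record as one JSON line to an archive kept with the build.

The field names mirror the list above. `evidence_record` is a hypothetical helper, not a feature of any particular CI product.

```python
import json
import platform
from datetime import datetime, timezone


def evidence_record(test_name: str, commit_sha: str, passed: bool) -> str:
    """Serialize one unit-verification result as a JSON line for the build's evidence archive."""
    record = {
        "test": test_name,
        "code_version": commit_sha,  # the exact commit the CI job built and tested
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "result": "pass" if passed else "fail",
        "environment": {  # where the test ran, for reproducibility
            "python": platform.python_version(),
            "os": platform.platform(),
            "machine": platform.machine(),
        },
    }
    return json.dumps(record, sort_keys=True)


# "3f9c2a1" is a placeholder commit SHA.
print(evidence_record("test_missing_slice_is_reported", "3f9c2a1", passed=True))
```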

SOUP Management: The Hidden Time Sink

SOUP (Software of Unknown Provenance) is IEC 62304's term for third-party software, open-source libraries, commercial components, and anything else not developed under your QMS. The FDA's equivalent term is OTS (Off-The-Shelf) software. Every SOUP item inherits the safety classification of the software item that contains it, so a library used inside a Class C item is treated as Class C.

For a Class C SaMD running on AWS, the SOUP list is long: the ML framework, DICOM libraries, web frameworks, AWS SDKs, container base images, and every transitive dependency they pull in. IEC 62304 requires for each SOUP item:

- Documented selection rationale

- Risk assessment (what happens if this component fails?)

- Version pinning and change monitoring

- Verification that the component meets your requirements

We automated SOUP management through the SBOM pipeline. Every build generated a Software Bill of Materials listing every dependency with version, license, and known vulnerabilities. The SBOM was cross-referenced against the National Vulnerability Database on every build, so new vulnerabilities were flagged automatically. Components with no updates in 12+ months, or declared end-of-life, triggered a risk reassessment.
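The reassessment triggers reduce to a small check per component. This sketch makes these assumptions:

- Each SBOM component has been enriched with a last-release date and an end-of-life flag. Standard SBOM formats do not necessarily carry those fields, so the record shape is hypothetical.
- Each component also carries the vulnerability IDs already matched for its version.

The package names are invented.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List

STALE_AFTER = timedelta(days=365)  # "no updates in 12+ months"


@dataclass
class SoupComponent:
    name: str
    version: str
    last_release: date  # assumed enrichment; not a standard SBOM field
    end_of_life: bool = False
    vulnerabilities: List[str] = field(default_factory=list)  # e.g. CVE IDs matched for this version


def reassessment_reasons(c: SoupComponent, today: date) -> List[str]:
    """Every reason this component needs a SOUP risk reassessment; empty means none."""
    reasons = []
    if c.vulnerabilities:
        reasons.append("known vulnerabilities: " + ", ".join(c.vulnerabilities))
    if c.end_of_life:
        reasons.append("declared end-of-life")
    if today - c.last_release >= STALE_AFTER:
        reasons.append(f"no release since {c.last_release.isoformat()}")
    return reasons


# Hypothetical SBOM entries.
sbom = [
    SoupComponent("imaging-lib", "2.4.1", date(2024, 11, 2)),
    SoupComponent("legacy-codec", "0.9.0", date(2021, 3, 15), end_of_life=True),
]
for c in sbom:
    reasons = reassessment_reasons(c, today=date(2025, 6, 1))
    if reasons:
        print(f"{c.name} {c.version}: risk reassessment needed ({'; '.join(reasons)})")
# legacy-codec 0.9.0: risk reassessment needed (declared end-of-life; no release since 2021-03-15)
```

Returning every reason, not just the first, gives the reassessment its full context in one place.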

The SOUP list for ContourCompanion had over 200 entries when you count transitive dependencies. To put that in perspective: the DICOM library alone pulled in dozens of transitive dependencies, each of which is a SOUP item that inherits the safety classification of the software item that uses it. A CVE in any one of those dependencies triggers a risk reassessment. One quarter, a vulnerability was flagged in a transitive dependency three levels deep in the imaging stack. The automated pipeline caught it within hours of the NVD publication. A manual process would not have caught it until the next quarterly review, if then.
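When a CVE lands in a transitive dependency, the first question for the risk reassessment is which direct dependency pulls it in. That determines which software item, and which classification, is affected. A breadth-first search over the resolved dependency graph answers the question. The graph and package names below are hypothetical. In practice the edges would come from the SBOM's dependency relationships.

```python
from collections import deque
from typing import Dict, List, Optional


def dependency_path(graph: Dict[str, List[str]], root: str, target: str) -> Optional[List[str]]:
    """Shortest dependency chain from root to target (breadth-first search), or None if unreachable."""
    parent: Dict[str, Optional[str]] = {root: None}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        if node == target:
            path: List[str] = []
            step: Optional[str] = node
            while step is not None:  # walk back up to the root
                path.append(step)
                step = parent[step]
            return path[::-1]
        for dep in graph.get(node, []):
            if dep not in parent:  # first visit is the shortest route in BFS
                parent[dep] = node
                queue.append(dep)
    return None


# Hypothetical resolved dependency graph (package -> direct dependencies).
graph = {
    "contour-service": ["dicom-toolkit", "web-framework"],
    "dicom-toolkit": ["image-codecs"],
    "image-codecs": ["compression-lib"],
    "web-framework": ["http-parser"],
}
print(" -> ".join(dependency_path(graph, "contour-service", "compression-lib")))
# contour-service -> dicom-toolkit -> image-codecs -> compression-lib
```

The first hop after the root (here `dicom-toolkit`) identifies the direct SOUP entry the reassessment starts from.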

Nobody has the bandwidth to manage 200+ SOUP items by hand. The automated pipeline turned it into a build stage that added a few minutes per run.

The Documentation Set That Actually Gets Produced

For a Class C SaMD under IEC 62304, here's the documentation we maintained:

| Document | IEC 62304 Clause | Updated When |
|---|---|---|
| Software Development Plan | 5.1 | Project start, major methodology changes |
| Software Requirements Specification | 5.2 | Every sprint (living document) |
| Software Architecture Document | 5.3 | Architecture changes only |
| Software Detailed Design | 5.4 | When Class C items change |
| Unit Verification Records | 5.5 | Every CI build (automated) |
| Integration Test Records | 5.6 | Every CI build (automated) |
| System Test Records | 5.7 | Each release milestone |
| Software Release Record | 5.8 | Each release |
| Traceability Matrix | Cross-cutting | Auto-generated from linked artifacts |
| SOUP List + Risk Assessment | 8.x | Every build (SBOM), risk review per sprint |
| Software Problem Reports | 9.x | As issues arise |
| Software Change Requests | 8.x | Every PR for Class C items |

Notice what's not on this list: a 200-page validation protocol. A requirements freeze document. A phase gate approval form. IEC 62304 doesn't require any of those. Teams that produce them are following a process they inherited, not a process the standard demands.

The total documentation footprint was smaller than most enterprise projects we've seen. Nothing existed without a reason. The traceability chain was real, built from actual links between artifacts, not a matrix someone filled in before submission.

Over-Documentation Is as Dangerous as Under-Documentation

Teams that document everything "just in case" create two problems:

First, the documentation becomes unmaintainable. When you have 400 pages of requirements documentation for a 50,000-line codebase, nobody updates it when the code changes. It drifts. By the time you submit to the FDA, your documentation describes a system that no longer exists. FDA reviewers are trained to spot inconsistencies between your requirements specification and your test results. Drift is a finding.

Second, over-documentation obscures the safety-critical information. When the risk management file is buried in a mountain of process documents, the hazard analysis that FDA reviewers actually need to evaluate gets lost. Reviewers spend their time on the risk management file and the traceability chain from hazard to control to verification. Everything else is supporting evidence. Burying the signal in noise slows the review and increases the chance of Additional Information requests.

The right amount of documentation is the minimum needed to demonstrate that every safety-relevant requirement has been identified, implemented, verified, and traced to a risk control. For Class C items, that minimum is substantial. For Class A items in the same system, it's much lighter. Architectural decomposition is how you keep the total volume manageable.

Where the Current Standard Falls Short

IEC 62304 was published in 2006 and amended in 2015. It shows its age in three specific places that affected how we built ContourCompanion.

Cloud deployment isn't addressed. The standard assumes software ships as a discrete release to a discrete environment. ContourCompanion runs on AWS with container orchestration, auto-scaling, and infrastructure defined in CDK. The concept of a "software release" in IEC 62304 Clause 5.8 maps awkwardly to a container image tagged and pushed to ECR. We defined our own release process that satisfied the clause requirements (release notes, known anomalies, verification evidence) but mapped to our actual deployment pipeline. Every team running cloud-native SaMD has to solve this translation problem, and there's no guidance in the standard for how to do it.
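The following sketch shows how the Clause 5.8 items can hang off a container image instead of a shipped installer. Its assumptions:

- The release is identified by the image's immutable content digest rather than its mutable tag.
- The `ReleaseRecord` fields and the gating function are hypothetical. They are not part of the standard or of any AWS API.

```python
import re
from dataclasses import dataclass, field
from typing import List

# A content digest is immutable, unlike a tag such as ":2.3.0" that can be re-pushed.
DIGEST = re.compile(r"^sha256:[0-9a-f]{64}$")


@dataclass
class ReleaseRecord:
    version: str
    image_digest: str  # digest of the image pushed to the registry
    release_notes: str
    anomalies_reviewed: bool = False  # residual known anomalies listed and evaluated
    known_anomalies: List[str] = field(default_factory=list)
    verification_evidence: List[str] = field(default_factory=list)  # links to archived test runs


def release_blockers(rec: ReleaseRecord) -> List[str]:
    """Reasons this release record is not yet complete; empty means it can ship."""
    problems = []
    if not DIGEST.match(rec.image_digest):
        problems.append("image must be referenced by sha256 digest, not by tag")
    if not rec.release_notes.strip():
        problems.append("missing release notes")
    if not rec.anomalies_reviewed:
        problems.append("known anomalies not reviewed")
    if not rec.verification_evidence:
        problems.append("no verification evidence attached")
    return problems


draft = ReleaseRecord("2.3.0", "contour-service:2.3.0", "Example release notes")
print(release_blockers(draft))
# ['image must be referenced by sha256 digest, not by tag', 'known anomalies not reviewed', 'no verification evidence attached']
```

An empty anomaly list is legitimate, which is why the check gates on a review flag rather than on the list's length.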

AI/ML model updates don't fit the change control model. When the underlying model is retrained on new data, is that a software change or a configuration change? IEC 62304 doesn't distinguish between the two. For ContourCompanion, we treated model retraining as a software change requiring the full change control process for Class C items: impact assessment, updated verification, regression testing, release documentation. That's the conservative interpretation, and it's what we recommended in the Algorithm Change Protocol. But it creates a heavy process burden for what might be a routine model improvement. The FDA's Predetermined Change Control Plan (PCCP) framework is starting to address this, but the underlying standard hasn't caught up.
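Treating retraining as a software change is easier to enforce when the model weights are configuration items with their own identity. The following minimal sketch assumes two things:

- The weights are a file in the build.
- The released digest is recorded in the release record.

Any retrain then produces different bytes, a different SHA-256, and a mandatory change request. The file name and helper functions are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def file_sha256(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a (potentially large) weights file in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def requires_change_request(weights: Path, released_digest: str) -> bool:
    """True when the weights differ from the released model, i.e. it was retrained or replaced."""
    return file_sha256(weights) != released_digest


with tempfile.TemporaryDirectory() as tmp:
    weights = Path(tmp) / "model.bin"   # hypothetical weights file
    weights.write_bytes(b"weights-v1")
    released = file_sha256(weights)     # digest recorded at release time
    weights.write_bytes(b"weights-v2")  # simulate a retrain
    print(requires_change_request(weights, released))  # True
```

Byte-level hashing is deliberately conservative. Even a retrain that yields nearly identical weights counts as a change, which matches the conservative interpretation described above.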

The three-class system is too coarse for decomposed architectures. We spent significant effort on the architectural decomposition that let us classify 40% of the codebase as Class A. The standard supports this, but the classification criteria are vague. "Can the software item contribute to a hazardous situation?" requires a judgment call for every item, and auditors can disagree with your judgment. Clearer criteria for software item classification would reduce ambiguity and the associated audit risk.

Edition 2 is in development and reportedly addresses all three gaps: cloud architectures, AI/ML, and a simplified two-level rigor model replacing the three classes. Build to Edition 1.1 (the consolidated 2006+AMD1:2015 text) now, but design your processes to be methodology-agnostic. If your SDLC is already agile and your deployment is already automated, the migration should be straightforward.

Frequently Asked Questions

Does IEC 62304 require waterfall?

No. IEC 62304 is methodology-agnostic. Table B.1 in Annex B explicitly lists incremental and evolutionary strategies, which is where agile fits, as valid approaches. The standard requires that lifecycle tasks are completed and traced, not that they follow a specific sequence. AAMI TIR45 provides detailed guidance on mapping agile practices to IEC 62304 requirements.

What is the difference between IEC 62304 Class A, B, and C?

The three safety classes are based on the severity of harm if the software fails. Class A: no injury possible. Class B: non-serious injury possible. Class C: death or serious injury possible. The classification drives documentation requirements: Class A needs only requirements and system testing, while Class C requires detailed design, unit verification, code review, and full traceability. You can classify individual software items independently, so a Class C system can contain Class A components with reduced documentation.

Is IEC 62304 mandatory for FDA submissions?

IEC 62304 is not legally mandatory, but the FDA recognizes it as a consensus standard. Compliance creates a presumption of conformity with FDA software lifecycle expectations, which means fewer questions during review. The FDA's June 2023 software guidance aligns closely with IEC 62304, and most SaMD manufacturers follow it as the path of least resistance.

What is SOUP in IEC 62304?

SOUP (Software of Unknown Provenance) is IEC 62304's term for third-party software components, including open-source libraries, commercial SDKs, and container base images. Every SOUP item inherits the safety classification of the software item that contains it. Each one requires documented selection rationale, risk assessment, version pinning, and change monitoring. For a Class C SaMD with 200+ transitive dependencies, automated SBOM pipelines are the only practical way to manage SOUP compliance.

References

- IEC 62304:2006+AMD1:2015 — Medical Device Software Lifecycle Processes

- AAMI TIR45:2012+A1:2018 — Guidance on Agile Practices in Medical Device Software

- 21 CFR Part 820 — Quality System Regulation

- ISO 14971:2019 — Risk Management for Medical Devices

- FDA: Content of Premarket Submissions for Device Software Functions (June 2023)

- FDA Recognized Consensus Standards

- National Vulnerability Database

SaMD Engineering

Part 3 of 11

1. What Is SaMD? Software as a Medical Device Explained

2. FDA Pathways for SaMD: 510(k) vs De Novo vs PMA

3. IEC 62304 in Practice: Medical Device Software Without the Waterfall

4. ISO 14971 Risk Management for SaMD: What FDA Reviewers Read

5. HIPAA Compliant AWS Architecture for Medical Device Software

6. Infrastructure as Code for Medical Devices: IQ OQ PQ with AWS CDK

7. Medical Device Cybersecurity: FDA Guidance, SBOMs, and Threat Modeling

8. Design Controls for Medical Devices: 21 CFR 820.30 in Practice

9. AI/ML SaMD: FDA Artificial Intelligence Guidance in Practice

10. PCCP for AI SaMD: FDA Predetermined Change Control Plans

11. SaMD Engineering Toolchain: How Small Teams Ship FDA-Cleared Software